
iEi: TANK AIoT Development Kit

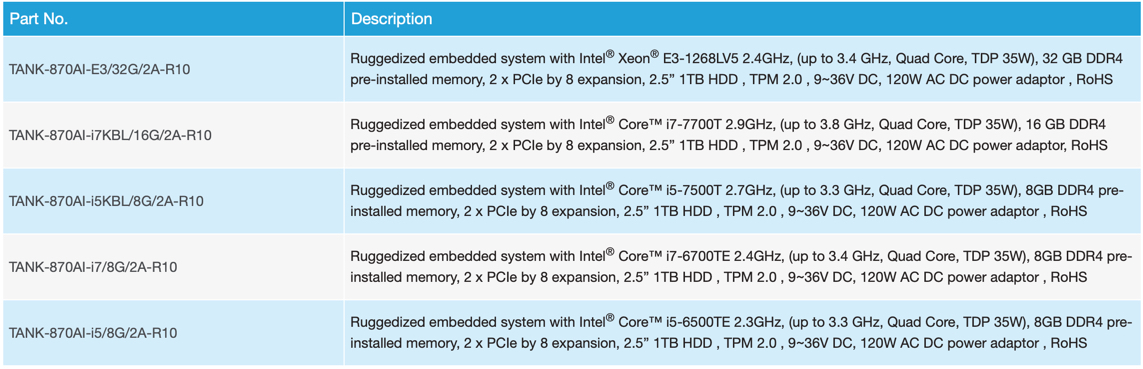

Intel IoT RFP Ready Kits

Description

Smart Choice for Inference System With AI

Artificial intelligence (AI) is changing the way we live. Through machine learning, it uses a variety of trained models to simulate and infer the status or appearance of objects. For example, an inference system running a video analysis model can perform face and vehicle license plate analysis for safety and security purposes.

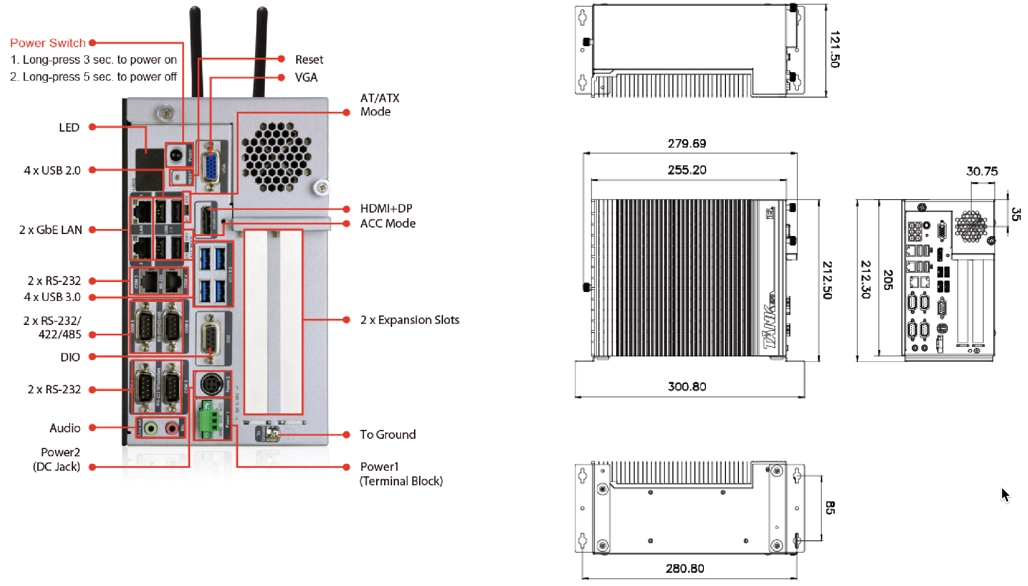

The TANK AIoT Dev. Kit features rich I/O and dual PCIe slots (x16) that support add-on cards, such as the acceleration cards (Mustang-F100-A10 and Mustang-V100-MX8) or the PoE card (IPCIE-4POE), to enhance performance and functionality for various applications.

IoT Solution Application

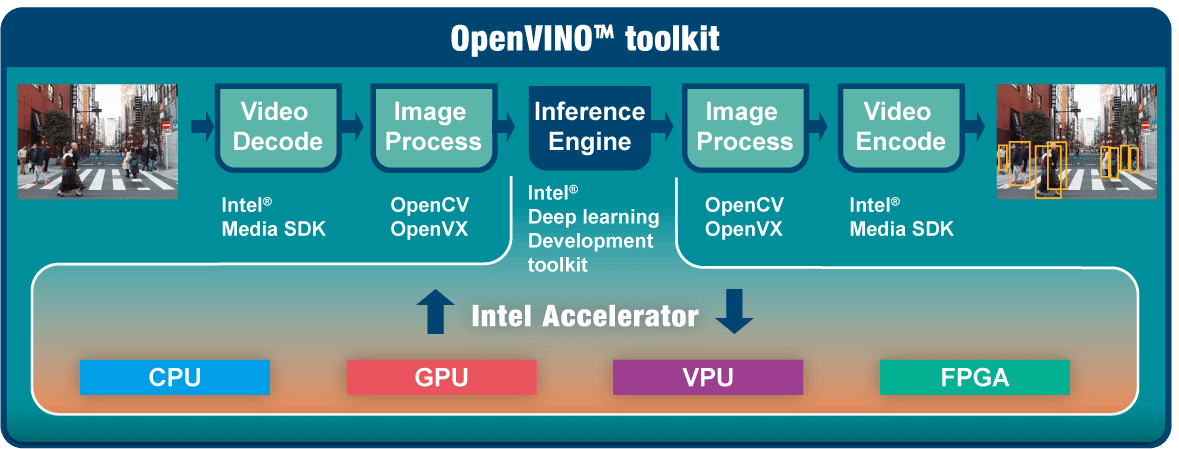

Intel® Distribution of OpenVINO™ toolkit

The Intel® Distribution of OpenVINO™ toolkit is based on convolutional neural networks (CNNs). It extends workloads across multiple types of Intel® platforms and maximizes performance.

It can optimize pre-trained deep learning models from frameworks such as Caffe, MXNet, and TensorFlow. The tool suite includes more than 20 pre-trained models and supports 100+ public and custom models (including Caffe*, MXNet, TensorFlow*, ONNX*, and Kaldi*) for easier deployment across Intel® silicon products (CPU, GPU/Intel® Processor Graphics, FPGA, VPU).
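As a rough illustration of deploying across these targets, the sketch below chooses an inference device string based on which hardware is present. The helper `pick_device`, the hardware labels, and the preference order are hypothetical and exist only for this example. The device strings (`CPU`, `GPU`, `HDDL`, `HETERO:FPGA,CPU`) follow the OpenVINO plugin naming as commonly documented, and the mapping of each Mustang card to a plugin is an assumption to verify against the toolkit version installed on the kit.

```python
# Minimal sketch: pick an OpenVINO device string for the hardware in the kit.
# The mapping below is an assumption for illustration, not an official table.
DEVICE_BY_HARDWARE = {
    "cpu": "CPU",                           # Intel Core/Xeon processor
    "gpu": "GPU",                           # Intel Processor Graphics
    "Mustang-V100-MX8": "HDDL",             # VPU acceleration card (assumed plugin)
    "Mustang-F100-A10": "HETERO:FPGA,CPU",  # FPGA card with CPU fallback (assumed)
}

# Hypothetical preference order: accelerator cards first, then GPU, then CPU.
DEFAULT_PREFERENCE = ("Mustang-F100-A10", "Mustang-V100-MX8", "gpu", "cpu")


def pick_device(installed, preference=DEFAULT_PREFERENCE):
    """Return the device string of the first preferred hardware that is installed.

    Falls back to the CPU device string when nothing in `preference` is present.
    """
    for name in preference:
        if name in installed:
            return DEVICE_BY_HARDWARE[name]
    return DEVICE_BY_HARDWARE["cpu"]


if __name__ == "__main__":
    # A kit with the VPU card fitted alongside the built-in CPU and GPU.
    print(pick_device({"cpu", "gpu", "Mustang-V100-MX8"}))
```

The resulting string would then be passed as the target device when loading a network with the toolkit's inference API. Keeping the choice in one place makes it easier to move the same model between the CPU and an add-on card.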

IoT Solution Specification

TANK AIoT Developer Kit

- 6th/7th Gen Intel® Core™/Xeon® processor platform with Intel® Q170/C236 chipset and DDR4 memory

- Dual independent display with high resolution support

- Rich high-speed I/O interfaces on one side for easy installation

- On-board internal power connector for providing power to add-on cards

- Great flexibility for hardware expansion

- Pre-installed Ubuntu 16.04 LTS

- Pre-installed Intel® Distribution of Open Visual Inference & Neural Network Optimization (OpenVINO™) toolkit, Intel® Media SDK, Intel® System Studio, and Arduino® Create